Fine-tuning is a powerful way to use TimeGPT more effectively. Foundation models such as TimeGPT are pre-trained on vast amounts of data, capturing wide-ranging features and patterns. These models can then be specialized for specific contexts or domains. With fine-tuning, the model’s parameters are refined to forecast a new task, tailoring its pre-existing knowledge to the requirements of the new data. Fine-tuning thus serves as a bridge linking TimeGPT’s broad capabilities to the specifics of your task.

Concretely, fine-tuning consists of performing a certain number of training iterations on your input data to minimize the forecasting error. The forecasts are then produced with the updated model. To control the number of iterations, use the finetune_steps argument of the forecast method.

Tutorial

Step 1: Import Packages and Initialize Client

First, we import the required packages and initialize the Nixtla client.
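A minimal sketch of this step, assuming the `nixtla` package is installed and that your API key is stored in the `NIXTLA_API_KEY` environment variable. The `make_client` helper is a hypothetical convenience wrapper introduced here for illustration; it is not part of the library.

```python
import os


def make_client(api_key=None):
    """Return a NixtlaClient, reading the key from NIXTLA_API_KEY if none is given.

    `make_client` is a hypothetical helper for this tutorial, not a library function.
    """
    key = api_key or os.environ.get("NIXTLA_API_KEY")
    if not key:
        raise ValueError("Set NIXTLA_API_KEY or pass api_key explicitly.")
    # Imported lazily so the missing-key check above runs even without the package.
    from nixtla import NixtlaClient  # requires `pip install nixtla`

    return NixtlaClient(api_key=key)
```

With a key configured, `nixtla_client = make_client()` gives the client object used in the following steps.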

Step 2: Load Data

Load the dataset from the provided CSV URL.
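The loading step might look like the sketch below. The CSV URL is not reproduced on this page, so the hypothetical `load_series` helper takes the source as a parameter; `pandas.read_csv` accepts a URL, a local path, or a file-like object. The in-memory sample reuses the first five rows shown in the table, and the column names `timestamp` and `value` are taken from that table.

```python
import io

import pandas as pd


def load_series(source):
    """Read a CSV with `timestamp` and `value` columns into a DataFrame.

    `source` may be a URL, a file path, or a file-like object.
    """
    df = pd.read_csv(source, parse_dates=["timestamp"])
    # Keep observations in chronological order with a clean integer index.
    return df.sort_values("timestamp").reset_index(drop=True)


# Small in-memory sample standing in for the real CSV.
sample = io.StringIO(
    "timestamp,value\n"
    "1949-01-01,112\n"
    "1949-02-01,118\n"
    "1949-03-01,132\n"
    "1949-04-01,129\n"
    "1949-05-01,121\n"
)
df = load_series(sample)
print(df.head())
```

Passing the dataset's CSV URL instead of the in-memory sample loads the full series.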

| | timestamp | value |
|---|---|---|
| 0 | 1949-01-01 | 112 |
| 1 | 1949-02-01 | 118 |
| 2 | 1949-03-01 | 132 |
| 3 | 1949-04-01 | 129 |
| 4 | 1949-05-01 | 121 |

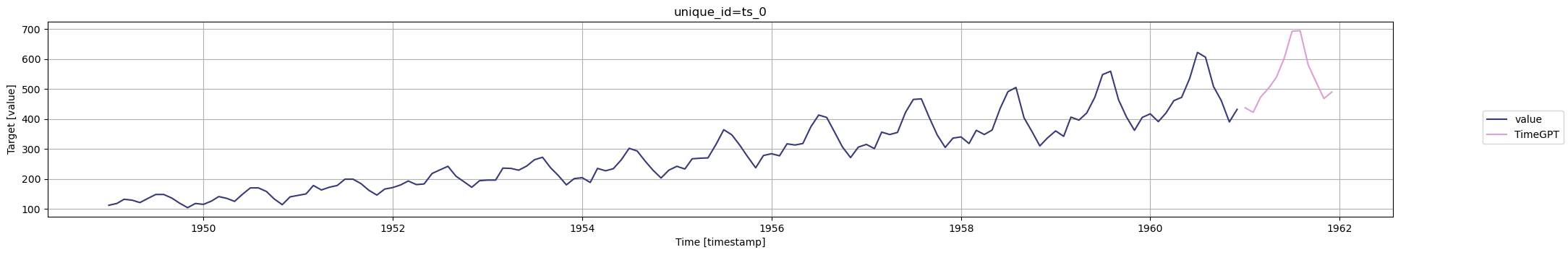

Step 3: Fine-tune the Model

Set the number of fine-tuning iterations with the finetune_steps parameter. Here, finetune_steps=10 means the model will go through 10 iterations of training on your time series data.
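As a rough sketch, the fine-tuned forecast call could be wrapped as follows. The `forecast_with_finetuning` function is a hypothetical helper; the forecast horizon of 12 is an assumption chosen for illustration, and the column names come from the table in Step 2. The `client` argument is expected to be an initialized Nixtla client.

```python
def forecast_with_finetuning(client, df, horizon=12, finetune_steps=10):
    """Forecast `horizon` steps ahead after `finetune_steps` fine-tuning iterations.

    Hypothetical wrapper: it only forwards its arguments to `client.forecast`.
    """
    return client.forecast(
        df=df,
        h=horizon,  # assumed horizon; pick one that suits your data
        finetune_steps=finetune_steps,  # number of training iterations on df
        time_col="timestamp",
        target_col="value",
    )
```

Setting finetune_steps=0 in this sketch skips fine-tuning, which gives a baseline to compare against.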

Conclusion

Keep in mind that fine-tuning can involve some trial and error. You might need to adjust the number of finetune_steps based on your specific needs and the complexity of your data. Usually, a larger value of finetune_steps works better for large datasets.

It’s recommended to monitor the model’s performance during fine-tuning and adjust as needed. Be aware that more finetune_steps may lead to longer training times and could potentially lead to overfitting if not managed properly.

Remember, fine-tuning is a powerful feature, but it should be used thoughtfully and carefully.
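One way to structure this trial and error is to hold out the last observations of the series, forecast them with several finetune_steps values, and compare the errors. The sketch below is a generic illustration, not a built-in feature: `compare_finetune_steps` and `forecast_fn` are hypothetical names, and `forecast_fn(train, horizon, steps)` is assumed to wrap your own forecast call and return a list of predicted values.

```python
def mean_absolute_error(actual, predicted):
    """Average absolute difference between two equal-length sequences."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def compare_finetune_steps(forecast_fn, train, holdout, candidates):
    """Score each candidate finetune_steps value on the holdout window.

    Returns the candidate with the lowest MAE and a dict of all scores.
    """
    scores = {}
    for steps in candidates:
        predicted = forecast_fn(train, len(holdout), steps)
        scores[steps] = mean_absolute_error(holdout, predicted)
    best = min(scores, key=scores.get)
    return best, scores
```

If the holdout error stops improving or starts rising as finetune_steps grows, that is a sign the extra iterations are no longer helping and may be overfitting.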

Additional Resources

- For a detailed guide on using a specific loss function for fine-tuning, check out the Fine-tuning with a specific loss function tutorial.

- Also, read our detailed tutorial on controlling the level of fine-tuning using finetune_depth.